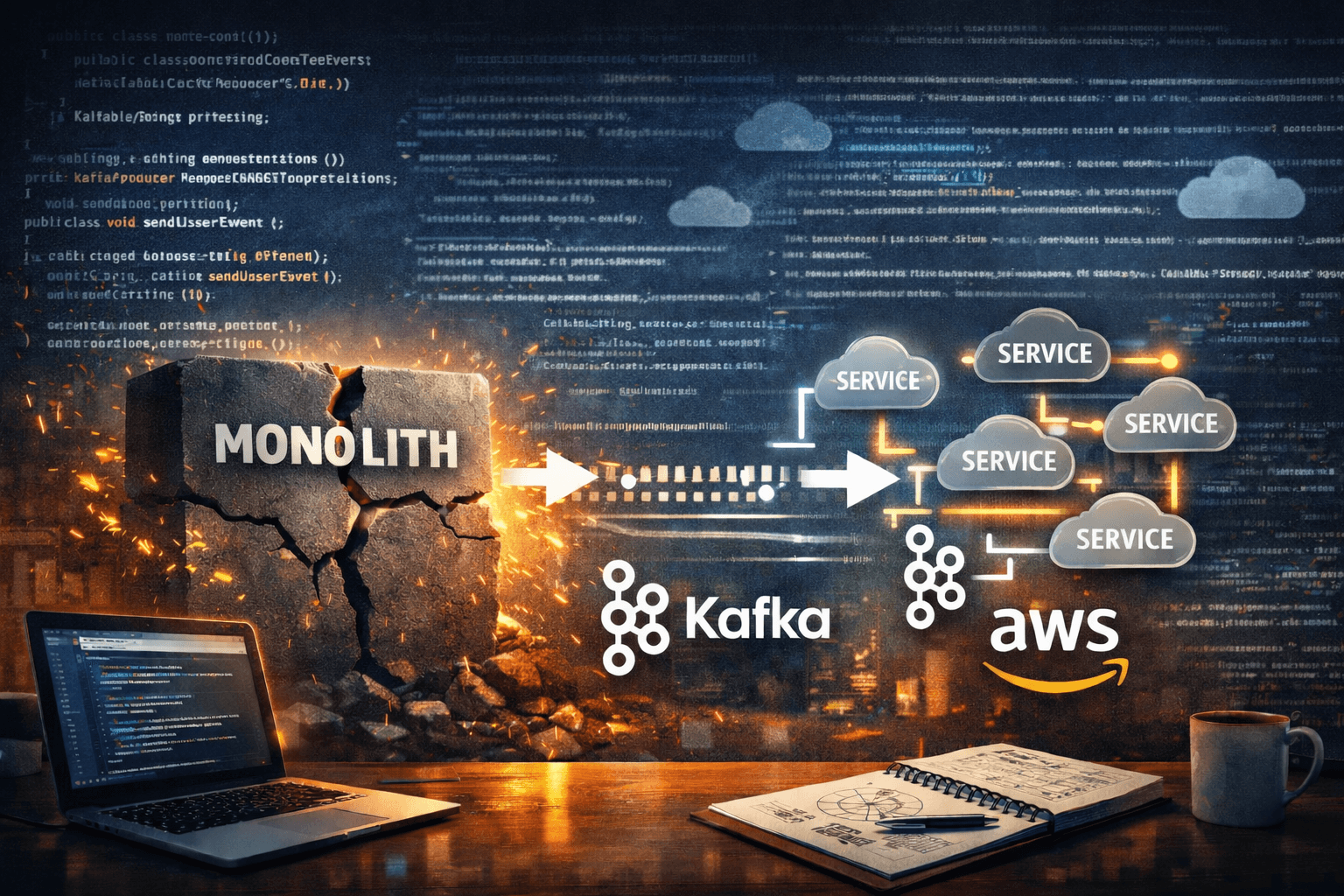

Inside My Monolith-to-Microservices Migration (Part 2): Aggregation, Resilience4J, and Production Deployment

How I integrated, hardened, and deployed the system — and what I still want to improve

Hey everyone! Welcome back to Java With John. Today I'll be sharing how I finished my monolith-to-microservice refactor of my SpringForge application. In doing so, you'll see what I learned about concurrency and Resilience4J, which I used to bring all the pieces together, along with how I made the final deployment of my API server.

Bringing the Pieces Together

In part 1 of this series, I focused on why I started this migration and how I separated the code generation services in my API server into AWS Lambda functions. At that point, what I hadn't figured out yet was how to bring everything together by aggregating their results. This would involve waiting for Kafka messages containing the generated code, saving the generated code to a zip file, and implementing retry and timeout mechanisms in case something goes wrong with the code generation.

Awaiting the Kafka Messages

To await the Kafka messages containing generated code, I implemented a strategy using CountDownLatch objects. A CountDownLatch lets a thread block until a counter reaches zero. The algorithm goes as follows:

From the user's CodeGenerationRequest, get the number of classes we need to generate using our Lambdas.

Using this number, initialize a CountDownLatch for us to count down all the classes we generate. This will be stored in a map with a correlation ID for the project as the key and the CountDownLatch as the value.

Send requests to generate the entities, controller advice, and repositories with the correlation ID in the message. The Lambdas handling the entity and repository generation will produce Kafka messages to generate the other classes (i.e., DTOs, services, controllers). All generated classes will echo back the correlation ID, so we can group them as part of the same project.

Await the end of the countdown and return the generated classes using CompletableFuture#supplyAsync. This completable future will return the generated classes once they are all completed.

Consume messages from the generated-class topic on the API server. For each class we receive, we fetch the appropriate CountDownLatch (based on the correlation ID) and call the CountDownLatch#countDown method to advance the countdown. We'll also store the generated classes in a map that we can use to keep track of and return the final result.

Once the countdown is over, fetch the generated classes from the map.

Write the generated classes into the zip file and return it.

Each time a user requests a project be generated, we run this algorithm on a separate thread that writes the zip file to a temporary storage location. While this is going on, they can call a GET /status/{correlationID} endpoint to get the status of the project generation and later call a GET /project/{correlationID} endpoint to get the final zip contents.
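To make the latch-based flow more concrete, here is a minimal sketch in plain Java. It is not the actual SpringForge code: the `GenerationAggregator` class, the `GeneratedClass` record, and the method names are all hypothetical, and the Kafka producer and consumer wiring is left out so the example only shows how the latch, the map, and the CompletableFuture fit together.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CompletionException;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Hypothetical aggregator; names and signatures are assumptions for illustration.
class GenerationAggregator {

    // Minimal stand-in for the payload of a message on the generated-class topic.
    record GeneratedClass(String correlationId, String className, String source) {}

    private final Map<String, CountDownLatch> latches = new ConcurrentHashMap<>();
    private final Map<String, Map<String, GeneratedClass>> results = new ConcurrentHashMap<>();

    // Registers a latch sized to the expected class count and awaits it off-thread.
    CompletableFuture<List<GeneratedClass>> start(String correlationId, int expectedClasses, long timeoutMs) {
        CountDownLatch latch = new CountDownLatch(expectedClasses);
        results.put(correlationId, new ConcurrentHashMap<>());
        latches.put(correlationId, latch);
        // The requests to the code generation Lambdas would be sent here.
        return CompletableFuture.supplyAsync(() -> {
            try {
                if (!latch.await(timeoutMs, TimeUnit.MILLISECONDS)) {
                    throw new CompletionException(
                            new TimeoutException("Timed out waiting for project " + correlationId));
                }
                return List.copyOf(results.get(correlationId).values());
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new CompletionException(e);
            } finally {
                // Clean up so finished projects do not accumulate in memory.
                latches.remove(correlationId);
                results.remove(correlationId);
            }
        });
    }

    // Would be called by the Kafka consumer for each message on the generated-class topic.
    void onGeneratedClass(GeneratedClass generated) {
        CountDownLatch latch = latches.get(generated.correlationId());
        Map<String, GeneratedClass> classes = results.get(generated.correlationId());
        if (latch == null || classes == null) {
            return; // Unknown or already finished project: ignore the message.
        }
        classes.put(generated.className(), generated);
        latch.countDown();
    }
}
```

In this sketch the timeout lives inside the supplier via `CountDownLatch#await(long, TimeUnit)`, which is only one way to do it. The future could also be bounded from the outside, for example with `CompletableFuture#orTimeout`.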

Resilience And Error Handling

With that algorithm figured out, now comes the issue of error handling, which can be tricky in this distributed environment. This is where Resilience4J comes into play. As mentioned above, we await the final code generation result in the API server using a CompletableFuture. Using Resilience4J, we can configure retry logic if the CompletableFuture times out with a TimeoutException.

Aside from that, we need a way to handle errors from the code generation Lambdas. Each of them sends a message (containing the project correlation ID) to an error topic if any exception happens. When the API server consumes a message from this topic, it completes the countdown early and clears the generated classes. When the CompletableFuture completes (since the countdown is finished), we check whether the expected number of classes is in the result. If not, we throw an exception so that our Resilience4J retry configuration triggers another attempt.
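The early-completion idea can be sketched in plain Java as follows. To keep the example self-contained, a small hand-rolled `withRetry` loop stands in for the Resilience4J retry, so this is not how Resilience4J itself is configured. The `ResilientGeneration`, `Attempt`, and `IncompleteGenerationException` names are hypothetical, and a timeout is folded into the same exception type purely for brevity.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

// Hypothetical sketch; class and method names are assumptions for illustration.
class ResilientGeneration {

    // Thrown when the latch opens (or times out) without the expected classes.
    static class IncompleteGenerationException extends RuntimeException {
        IncompleteGenerationException(String message) {
            super(message);
        }
    }

    // State for one code generation attempt of one project.
    static class Attempt {
        private final int expected;
        private final CountDownLatch latch;
        private final Map<String, String> classes = new ConcurrentHashMap<>();

        Attempt(int expected) {
            this.expected = expected;
            this.latch = new CountDownLatch(expected);
        }

        // Would be called by the consumer of the generated-class topic.
        void onGeneratedClass(String name, String source) {
            classes.put(name, source);
            latch.countDown();
        }

        // Would be called by the consumer of the error topic:
        // drop partial results and open the latch early.
        void onError() {
            classes.clear();
            while (latch.getCount() > 0) {
                latch.countDown();
            }
        }

        // Blocks until the latch opens, then checks the class count.
        Map<String, String> awaitResult(long timeoutMs) {
            try {
                if (!latch.await(timeoutMs, TimeUnit.MILLISECONDS)) {
                    throw new IncompleteGenerationException("Timed out waiting for classes");
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
            if (classes.size() != expected) {
                throw new IncompleteGenerationException(
                        "Expected " + expected + " classes but got " + classes.size());
            }
            return Map.copyOf(classes);
        }
    }

    // Hand-rolled stand-in for a retry decorator: re-runs the whole attempt on failure.
    static <T> T withRetry(int maxAttempts, Supplier<T> attempt) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        IncompleteGenerationException last = null;
        for (int i = 0; i < maxAttempts; i++) {
            try {
                return attempt.get();
            } catch (IncompleteGenerationException e) {
                last = e; // Remember the failure and try again.
            }
        }
        throw last;
    }
}
```

Note that `onError` clears the map before opening the latch, so a class arriving between those two calls could still slip in. The size check in `awaitResult` is what ultimately decides whether the attempt counts as complete.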

Idempotency

While these two approaches sound great and straightforward, one thing we need to be aware of with this retry logic is the possibility of duplicate class entries. Let's say that we get an error from one of our Lambdas and need to retry the entire code generation flow. At the same time, there may be a Lambda still processing for the previous attempt that sends a message with its generated class result. In that case, because of the timing of things, there's a possibility of getting duplicate classes: one from the previous code generation attempt, and one from the retry.

To prevent this from happening, I decided to store the generated classes in a Map<String, Map<String, GeneratedClass>> data structure where the key of the outer map is the project correlation ID, the key of the inner map is the name of the class, and the value of the inner map is the generated class itself. This way, we can ensure idempotency: when we insert generated classes for a project and find a duplicate class name, we overwrite the previously saved result.
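A minimal sketch of that nested map, assuming a hypothetical `GeneratedClassStore` wrapper and a simplified `GeneratedClass` record (the real class likely carries more fields):

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical store; names are assumptions for illustration.
class GeneratedClassStore {

    // Simplified stand-in for the real generated class type.
    record GeneratedClass(String name, String source) {}

    // Outer key: project correlation ID. Inner key: class name.
    private final Map<String, Map<String, GeneratedClass>> byProject = new ConcurrentHashMap<>();

    // Saving the same class name twice overwrites the earlier entry,
    // so a late message from a previous attempt cannot create a duplicate.
    void save(String correlationId, GeneratedClass generated) {
        byProject.computeIfAbsent(correlationId, id -> new ConcurrentHashMap<>())
                 .put(generated.name(), generated);
    }

    // Returns an immutable snapshot of the classes stored for a project.
    Map<String, GeneratedClass> classesFor(String correlationId) {
        return Map.copyOf(byProject.getOrDefault(correlationId, Map.of()));
    }

    // Drops everything stored for a project, e.g. before a retry.
    void clear(String correlationId) {
        byProject.remove(correlationId);
    }
}
```

Keying by class name means the last write wins. That matches the behavior described above, on the assumption that a retry produces an equivalent class for the same name.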

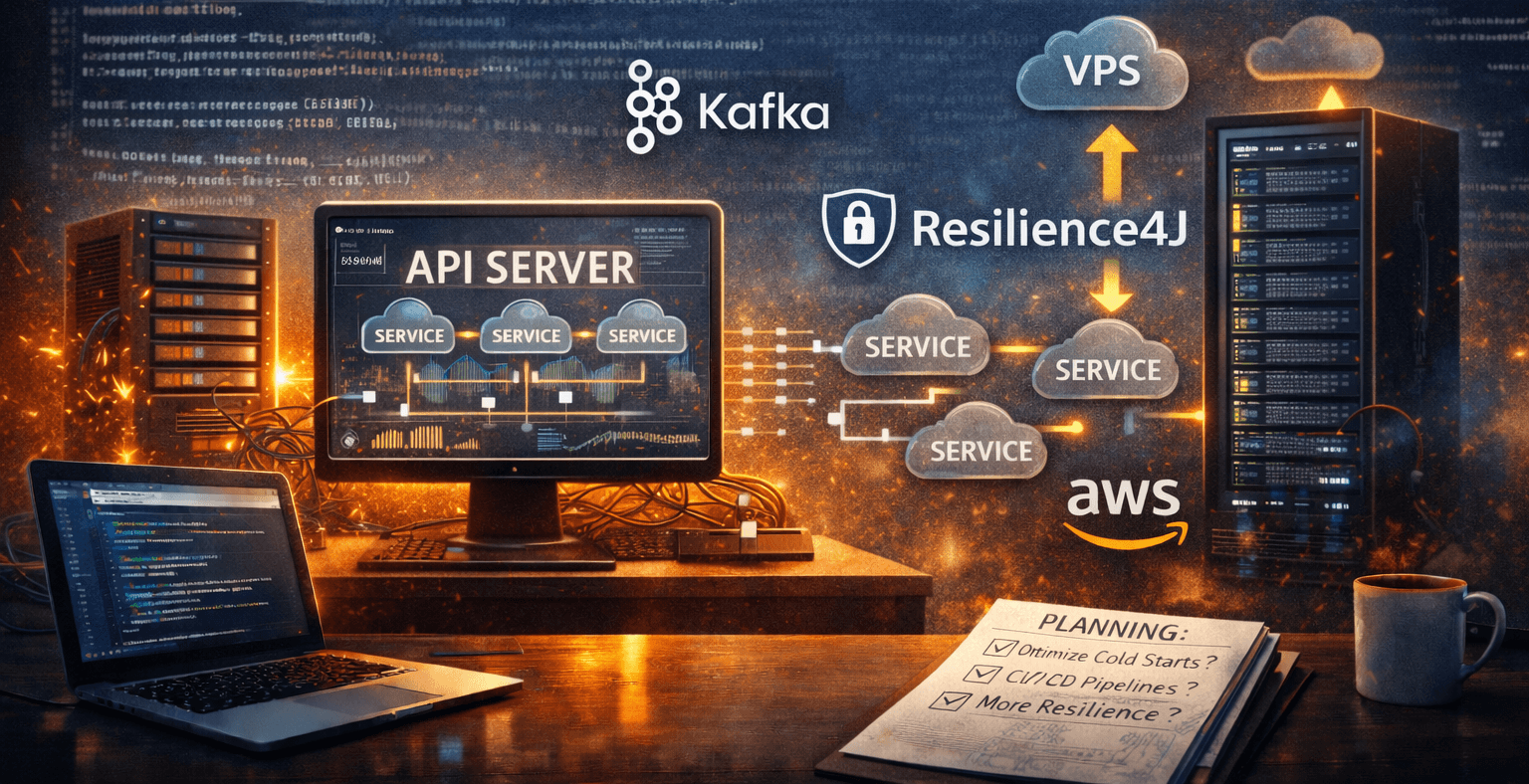

Re-deploying Our API Server

With all these changes added, one further problem arises when it comes to our API server deployment. Previously, I had deployed my API server using Heroku. While it worked fairly well for the previous architecture, because Heroku doesn't keep the API server always-on, we might not always be ready to consume our Kafka messages. Thus, I decided I needed to migrate my API server to an always-on VPS. For this purpose, I chose Contabo as an affordable option that I can easily set up and connect to. From there, to deploy the API server, I:

Wrote a new Dockerfile to build the Docker image on the VPS

Logged in to the Contabo VPS as the root user

Cloned my Git repository containing my backend

Created a .env file containing the production secrets (ensuring it is ignored by Git)

Wrote a Docker Compose file to set the .env file, set the volumes, and handle the port mapping

Started the API server using the Docker Compose CLI tool.

Added a DNS A record for the VPS using my domain provider's management tool. This will map the backend to an api.springforgeapp.com domain.

Set up an Nginx reverse proxy on the VPS to forward HTTP requests to my API.

Used Certbot with its Nginx plugin to set up SSL.

What I Could Still Improve

While this migration was officially a success, there are still some things I can improve on from this point:

Creating Proper CI/CD Pipelines: Instead of manually building and uploading jars to AWS Lambda for my code generation services, I should find a way to use GitHub Actions to automate this when I merge a branch into main. I could also automate copying my backend code to the VPS instead of logging in and pulling from GitHub every time.

Cold Start Optimization: While the new architecture gives me scalability and maintainability gains, the code generation process is noticeably slower when the AWS Lambdas cold start (although on warm starts it performs quite well). To counteract this, I need to research configuration options to reduce the impact of cold starts while staying on budget.

Consider Using Redis: For the temporary storage of a project's generated classes, I could potentially use Redis to avoid using up server memory. That said, I should only consider this as a possible future optimization once I have more of a user base.

Research and Profile Other Resilience Strategies: While I have a working resilience strategy, I need to see if it works well in production and if there are other resilience mechanisms I can add, like a Bulkhead.

Conclusion

In this blog post, we went over how I completed my monolith-to-microservice journey by creating a working and resilient algorithm for aggregating distributed processing from my microservices. Using CountDownLatch objects, we can await the expected number of results we need from our microservices. With Resilience4J, we can create retry logic to retry our distributed processing if any error or unexpected result occurs. Lastly, using Docker, we can easily set up an API Server deployment on a cheap, always-on VPS like those provided by Contabo. With that, I hope you found my monolith-to-microservice journey interesting and useful for your future projects. Happy coding!