Inside My Monolith-to-Microservices Migration Using Spring, Kafka, and AWS

Breaking the monolith, one service at a time

Hey everyone! Welcome back to Java With John. Today, I’ll be sharing how and why I’m migrating my project, SpringForge (an app for generating simple Spring applications), from a monolithic to a microservice architecture.

Uncovering the Monolith

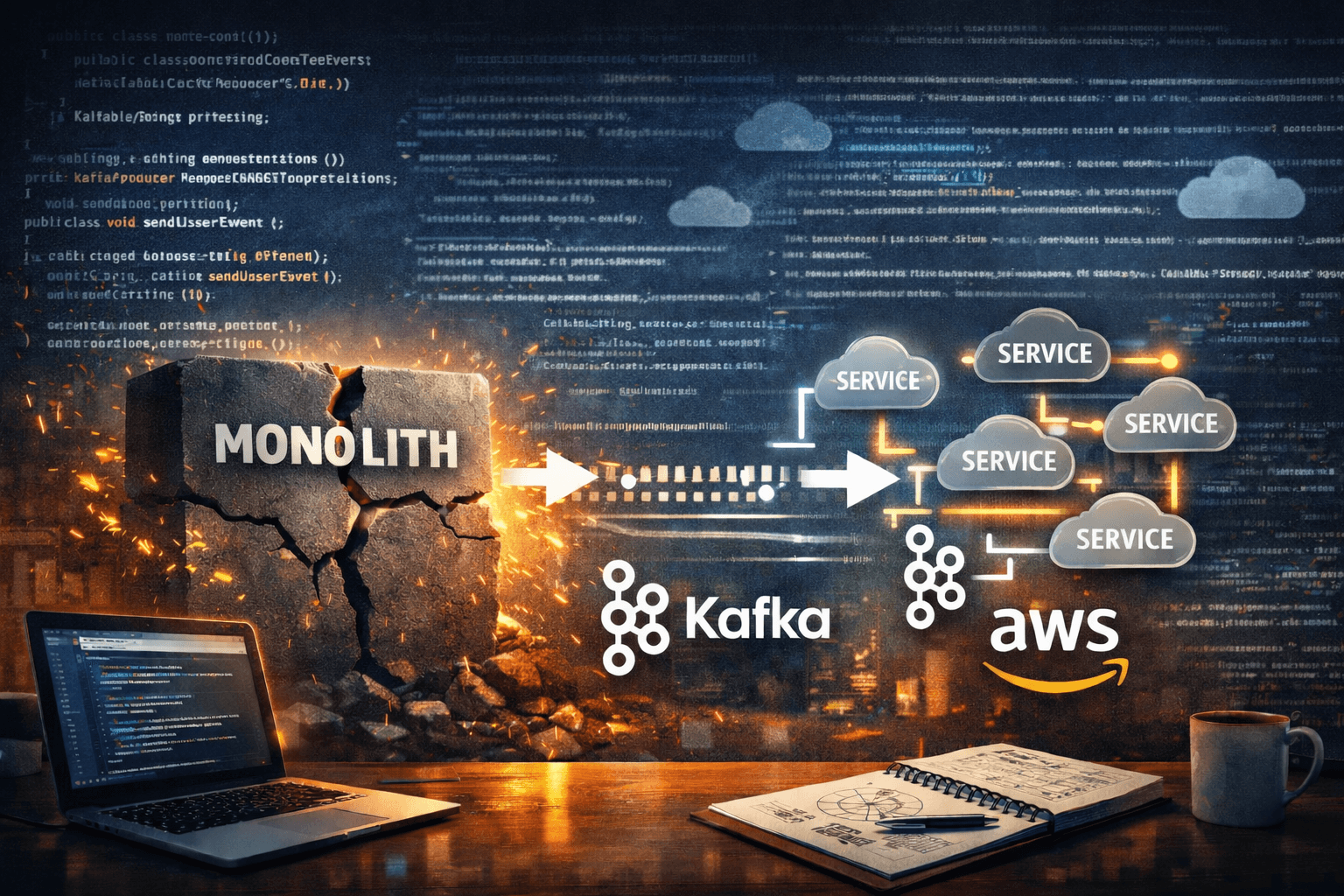

To understand the methods and motivations behind this architectural shift, you first need to understand how the current monolithic version of my application works. The magic behind SpringForge involves a group of “generator” service classes, each responsible for generating code for a different component of a Spring application (e.g., controllers, services, and entities). These service classes run inside the same Spring application, depend on code generated by each other, and are orchestrated by a single APIGenerationService. The diagram above shows the architecture behind this approach (with some details left out for simplicity).

Monolithic Struggles

From the architectural diagram, it becomes immediately clear that orchestrating all of this in code (especially in a single service) is cumbersome and messy. The code confirms it: there are many moving parts and a lot of tight coupling, which make it hard to write cleanly and to maintain.

Scalability and parallelism bring further issues with this design. For context, I noticed that this workflow can be parallelized per entity. From the architecture, you’ll see that classes get generated in the order Entity → DTO → Repository → Service → Controller for each entity. Therefore, we can run this flow in parallel per entity in the project. The issue, though, is that all these services live in the same Spring application, so I expect parallelism to be limited by the servlet thread pool used to handle HTTP requests. Aside from that, we also can’t scale the generator services independently of the controller entry point or of each other, based on the load on each component.
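To make the per-entity parallelism concrete, here is a minimal sketch of the idea. It assumes a stand-in `generateFor` method in place of the real generators, and every name in it (`PerEntityPipeline`, `generateAll`, the `Dto` suffix) is hypothetical rather than taken from SpringForge. Each entity's chain runs as one task on a dedicated thread pool instead of on the servlet request threads:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Hypothetical sketch: names here are illustrative, not SpringForge's actual classes.
final class PerEntityPipeline {

    // The generation order described above; each stage builds on the previous one.
    static final List<String> STAGES =
            List.of("Entity", "Dto", "Repository", "Service", "Controller");

    // Stand-in for the real generators: returns the class names produced for one entity, in order.
    static List<String> generateFor(String entityName) {
        List<String> generated = new ArrayList<>();
        for (String stage : STAGES) {
            // The Entity stage yields the bare name (e.g. "User"); later stages append a suffix.
            generated.add(stage.equals("Entity") ? entityName : entityName + stage);
        }
        return generated;
    }

    // Runs one pipeline per entity in parallel on a dedicated pool.
    static List<String> generateAll(List<String> entityNames, int poolSize) {
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        try {
            List<CompletableFuture<List<String>>> futures = new ArrayList<>();
            for (String name : entityNames) {
                futures.add(CompletableFuture.supplyAsync(() -> generateFor(name), pool));
            }
            List<String> all = new ArrayList<>();
            for (CompletableFuture<List<String>> future : futures) {
                all.addAll(future.join()); // join in submission order so the result is deterministic
            }
            return all;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(generateAll(List.of("User", "Order"), 4));
    }
}
```

The pool size is the knob that a shared servlet thread pool does not give you: it can be tuned for generation work without affecting how many HTTP requests the application accepts.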

In Comes Microservices

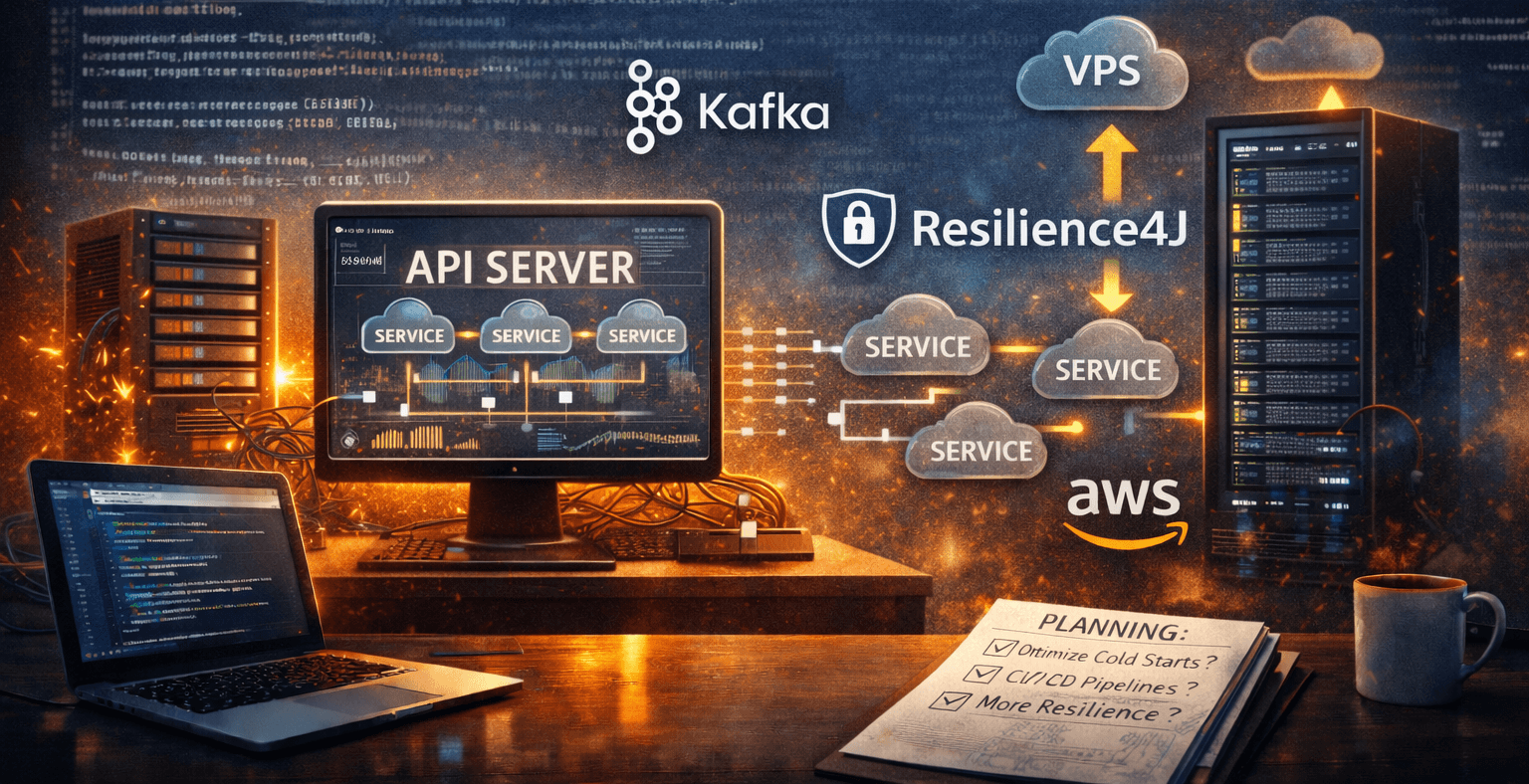

From these issues, I got the idea of creating an event-driven microservice architecture as my solution. Each service will be its own deployable unit, responsible for generating classes and requesting that other classes be generated based on its results. When the user wants to create a project, events will be sent to generate the entities, DTOs, repositories, services, and lastly, controllers. To group these generated classes as a single user’s project, each message will contain a correlation ID that the API server will use to aggregate the results into a project zip. Finally, to handle the exchange of these messages, I’ve decided to use Apache Kafka (with Avro for message schemas) for durable and reliable message queueing, and to improve my skills with it for my current job.
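As a rough illustration of the correlation ID idea, the sketch below groups incoming "file generated" messages by correlation ID and hands back the complete file list once a project is finished. It is an in-memory stand-in with the Kafka consumer left out, and it assumes the API knows up front how many files each project should contain. The class and method names (`ProjectAggregator`, `startProject`, `onFileGenerated`) are hypothetical:

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch of correlation-ID aggregation; not the actual SpringForge code.
final class ProjectAggregator {

    // Files received so far, keyed by the correlation ID of the user's project.
    private final Map<String, List<String>> filesByCorrelationId = new HashMap<>();
    // Assumption: the API knows up front how many files each project should contain.
    private final Map<String, Integer> expectedCounts = new HashMap<>();

    synchronized void startProject(String correlationId, int expectedFileCount) {
        expectedCounts.put(correlationId, expectedFileCount);
        filesByCorrelationId.put(correlationId, new ArrayList<>());
    }

    // Called once per "file generated" message. Returns the full file list when the
    // last expected file arrives, and an empty Optional otherwise.
    synchronized Optional<List<String>> onFileGenerated(String correlationId, String fileName) {
        List<String> files = filesByCorrelationId.get(correlationId);
        if (files == null) {
            return Optional.empty(); // unknown or already completed project
        }
        files.add(fileName);
        if (files.size() < expectedCounts.get(correlationId)) {
            return Optional.empty(); // still waiting for more files
        }
        // Project complete: forget its state and return the files, ready to be zipped.
        filesByCorrelationId.remove(correlationId);
        expectedCounts.remove(correlationId);
        return Optional.of(List.copyOf(files));
    }

    public static void main(String[] args) {
        ProjectAggregator aggregator = new ProjectAggregator();
        aggregator.startProject("project-1", 2);
        System.out.println(aggregator.onFileGenerated("project-1", "User.java"));
        System.out.println(aggregator.onFileGenerated("project-1", "UserDto.java"));
    }
}
```

A real implementation would also need a timeout for projects that never complete, which this sketch leaves out.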

With this new approach, we can directly address many of the issues with the monolithic architecture. By having a separately deployable service per code generator, each can have its own threads/processors, greatly improving scalability and flexibility. With separate codebases per service, we also get better modularity and more freedom in how these services are built (e.g., if I wanted to migrate them to a different language). Lastly, with some orchestration handled within each microservice, I can potentially clean up much of that messy logic in the API.

The diagram at the top of this section shows roughly what the new architecture would look like.

The Implementation

Tools

In my work-in-progress implementation of this new architecture, I had to learn and use many tools and services, including:

AWS Lambda for easy microservice deployments with auto-scaling functionality

A Kafka Cluster hosted on Cloud Clusters for a reasonably priced messaging solution

Apache Avro for message formats, serialization, and deserialization

DigitalOcean for hosting a VM running an open-source Avro schema registry

The Refactoring Process

The refactoring process has been a long journey, but with a repeatable set of steps to guide me along the way, it has been fairly smooth despite the tedious effort required. For each generator service, I:

Created a new Maven module

Copied the service class to the new module along with its tests

Created Avro files with new event messages containing the required data

Generated classes from my Avro files using a Maven plugin

Refactored the code to use the new Avro-generated classes

Ensured the tests for the service still passed

Added message handling logic for the new microservice

Deployed the service to AWS Lambda with the necessary Kafka connection properties

Tested sending and receiving messages from the service

To help with this process, I created a generator-common module, which provides abstractions for many of the message handling tasks the microservices have in common. Additionally, I wrote simple classes to test sending Kafka messages to the services and receiving messages from them.
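This post does not show the generator-common code, so the following is only one possible shape for such an abstraction. It assumes a plain Java class in place of the Avro-generated event classes and a `Consumer` callback in place of a Kafka producer. All names (`GenerationEvent`, `GeneratorHandler`, `DtoGeneratorHandler`, the event type strings) are hypothetical:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical sketch of a shared handler abstraction; the real generator-common module may differ.
final class GeneratorCommonSketch {

    // Plain-Java stand-in for an Avro-generated event class.
    static final class GenerationEvent {
        final String correlationId;
        final String type;    // e.g. "ENTITY_GENERATED"
        final String payload; // e.g. a class name or generated source code

        GenerationEvent(String correlationId, String type, String payload) {
            this.correlationId = correlationId;
            this.type = type;
            this.payload = payload;
        }
    }

    // Template shared by every generator service: handle one event, publish the follow-up event.
    abstract static class GeneratorHandler {
        private final Consumer<GenerationEvent> publisher; // stand-in for a Kafka producer

        GeneratorHandler(Consumer<GenerationEvent> publisher) {
            this.publisher = publisher;
        }

        // Service-specific generation logic.
        protected abstract String generate(String payload);

        // Event type this service emits when it finishes.
        protected abstract String outputType();

        final void handle(GenerationEvent incoming) {
            String result = generate(incoming.payload);
            // Propagate the correlation ID so results can be grouped by project later.
            publisher.accept(new GenerationEvent(incoming.correlationId, outputType(), result));
        }
    }

    // Example service: turns an entity name into a DTO class name.
    static final class DtoGeneratorHandler extends GeneratorHandler {
        DtoGeneratorHandler(Consumer<GenerationEvent> publisher) {
            super(publisher);
        }

        @Override
        protected String generate(String entityName) {
            return entityName + "Dto";
        }

        @Override
        protected String outputType() {
            return "DTO_GENERATED";
        }
    }

    public static void main(String[] args) {
        List<GenerationEvent> published = new ArrayList<>();
        new DtoGeneratorHandler(published::add)
                .handle(new GenerationEvent("project-1", "ENTITY_GENERATED", "User"));
        GenerationEvent out = published.get(0);
        System.out.println(out.correlationId + " " + out.type + " " + out.payload);
    }
}
```

Passing the publisher in as a callback is also what makes simple send/receive test classes easy to write: a test can collect published events in a list instead of talking to a broker.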

What's Next

To finish this refactor, I still need to connect my microservices to each other through messages containing their processing results. I also need to put it all together in the original Spring application, which will send the initial Kafka message and aggregate the final results. Lastly, I need to add some form of fault tolerance (possibly with something like Resilience4j) to the entire workflow. For example, if a transient communication error or an exception occurs, I need an error topic that lets the API report the error or retry part of the code generation.
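The retry-then-report idea can be sketched in plain Java. This is not the Resilience4j API, just an illustration of the behavior I have in mind, with the error topic modeled as a `Consumer<String>` and all names (`RetryWithErrorTopic`, `runWithRetry`) hypothetical:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;
import java.util.function.Consumer;
import java.util.function.Supplier;

// Hypothetical sketch of retry-then-report; this is plain Java, not the Resilience4j API.
final class RetryWithErrorTopic {

    // Tries the task up to maxAttempts times. If every attempt fails, reports the
    // last error to the error sink (a stand-in for an error topic) and returns empty.
    static <T> Optional<T> runWithRetry(Supplier<T> task, int maxAttempts,
                                        long backoffMillis, Consumer<String> errorSink) {
        RuntimeException lastError = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return Optional.of(task.get());
            } catch (RuntimeException e) {
                lastError = e;
                if (attempt < maxAttempts) {
                    sleep(backoffMillis * attempt); // simple linear backoff between attempts
                }
            }
        }
        String reason = lastError == null ? "no attempts were made" : lastError.getMessage();
        errorSink.accept("Generation failed after " + maxAttempts + " attempts: " + reason);
        return Optional.empty();
    }

    private static void sleep(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for the caller
        }
    }

    public static void main(String[] args) {
        List<String> errorTopic = new ArrayList<>();
        Optional<String> result = runWithRetry(() -> {
            throw new IllegalStateException("broker unavailable");
        }, 3, 10L, errorTopic::add);
        System.out.println(result.isPresent() + " " + errorTopic);
    }
}
```

In the real workflow, the error sink would publish to a Kafka topic that the API consumes, so a failed project can be reported to the user or retried.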

Conclusion

With that, I hope I’ve given you some useful insights from my microservice migration journey, including the architectural decisions, tools, and workflow that are making this migration successful so far. Stay tuned for more about this migration in a future blog post. Happy coding!